With AI text generation growing fast, a lot of people wonder: Is it even possible to reliably detect machine-written content? The short answer: yes, but only to a point.

Detection is probabilistic, not certain. There are patterns and tools, but also workarounds, false flags, and evolving models that make detection tricky. Let’s dig in.

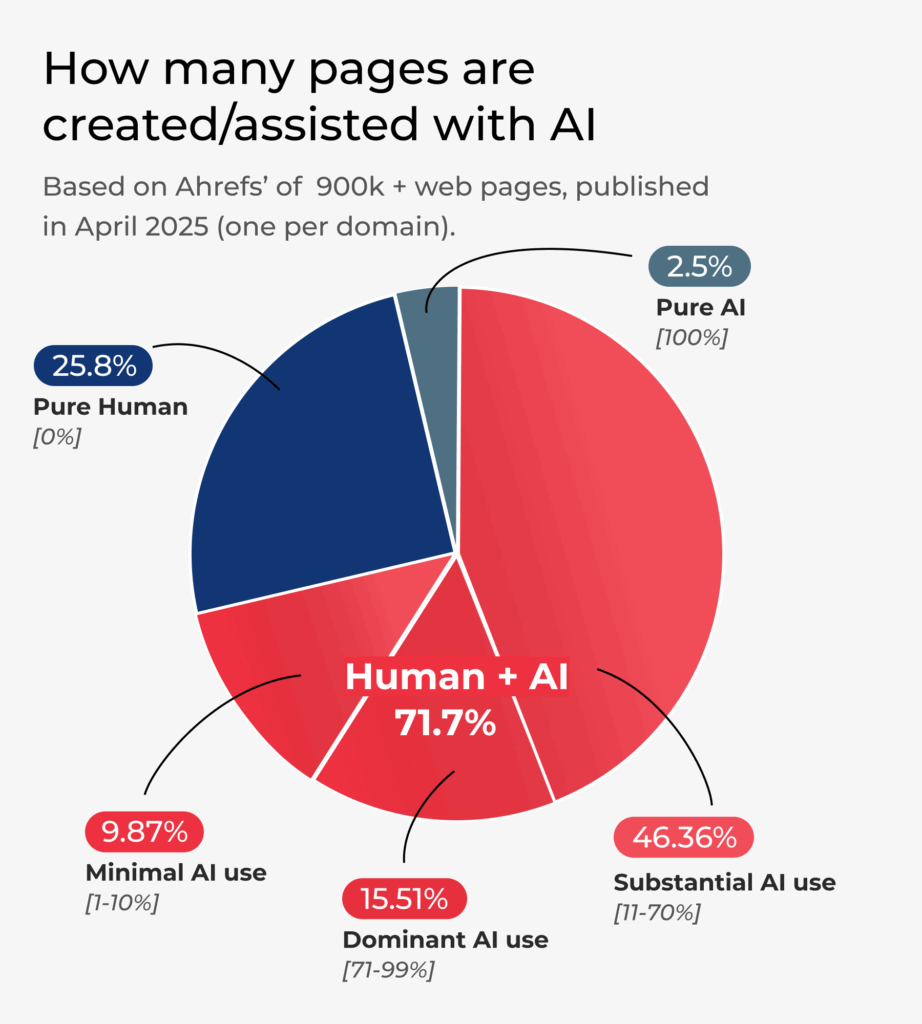

Recent Data: How Prevalent is AI Content?

In April 2025, Ahrefs analyzed 900,000 new English-language web pages (one per domain) and found that 74.2% had some AI-generated content.

Another study in educational integrity showed that detection tools are much more accurate at spotting content from older AI models (e.g. GPT-3.5) than more advanced models (GPT-4), and that false positives occur when human texts are unusual or edited with AI aids.

In tests comparing free and premium detectors (Scribbr, QuillBot, etc.), premium tools often reached ~80-84% accuracy on mixed samples; free tools scored somewhat lower.

These numbers show two things: AI content is common now, and detection tools are getting better, but they’re far from perfect.

What Patterns and Signals Do Detection Tools Use?

Detection tools generally rely on statistical, linguistic, and sometimes engineered watermarking or metadata indicators. Here are some of the common features:

| Feature | What it is | Why AI might show it |
| --- | --- | --- |
| Perplexity / Predictability | Measures how predictable the next word is given prior context | AI often produces more “statistically likely” continuations, so lower perplexity in some models |
| Burstiness / Sentence-Length Variation | Variation in sentence length, structure, etc. | Human writing tends to vary more; AI might produce more uniform patterns unless explicitly prompted otherwise |
| Common phrase / n-gram repetition | Reuse of common sequences, clichés, or formulaic phrasing | AI models trained on large corpora may favor certain frequent patterns resulting in detectable repetition |
| Vocabulary richness & lexical diversity | How many rare words, varied synonyms are used vs common ones | Human authors often bring in unique or varied vocabulary; AI may default to safer/common vocabulary unless pushed otherwise |
| Stylistic quirks, mistakes, or human idiosyncrasies | Typos, colloquialisms, incomplete thoughts, digressions, emotional tone etc. | AI tends to smooth over such quirks, producing more polished text (though newer models sometimes mimic them) |
| Watermarking / embedded signals | Hidden or explicit signals included in the generation process by model developers | If implemented, these are reliable for detection, but only if access to those signals exists and they haven’t been stripped or paraphrased away |
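
To make a few of these signals concrete, here is a minimal Python sketch that computes rough proxies for burstiness, lexical diversity, and n-gram repetition. The function names, the naive sentence splitter, and the choice of trigrams are assumptions made for illustration. Real detectors rely on trained language models (perplexity, for instance, requires one), so treat this as a toy and not as how any particular tool works.

```python
import re
from collections import Counter
from statistics import mean, pstdev


def split_sentences(text):
    # Naive splitter (assumption): break after ., ! or ? followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def tokenize(text):
    # Lowercase word tokens; apostrophes are kept inside words.
    return re.findall(r"[a-z']+", text.lower())


def burstiness(text):
    # Coefficient of variation of sentence lengths, measured in words.
    # Higher values mean more variation between sentences.
    lengths = [len(tokenize(s)) for s in split_sentences(text)]
    lengths = [n for n in lengths if n > 0]
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def type_token_ratio(text):
    # Share of distinct words among all words: a crude lexical-diversity proxy.
    tokens = tokenize(text)
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def repeated_trigram_share(text):
    # Fraction of three-word sequences that occur more than once in the text.
    tokens = tokenize(text)
    trigrams = [tuple(tokens[i:i + 3]) for i in range(len(tokens) - 2)]
    if not trigrams:
        return 0.0
    counts = Counter(trigrams)
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(trigrams)


if __name__ == "__main__":
    sample = "The cat sat down. The dog sat down. The bird sat down."
    print(burstiness(sample), type_token_ratio(sample), repeated_trigram_share(sample))
```

None of these numbers proves anything on its own. They are sensitive to text length and genre, which is one reason detection stays probabilistic.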

AI Tools You Can Use (and How Well They Perform)

Here are some detection tools, what they promise, and what studies find:

| Tool | Strong points | Limitations / Observed Weaknesses |
| --- | --- | --- |
| Turnitin’s AI Detector | Widely used in academia; claims ~98% accuracy under certain conditions. | Less effective when AI text is heavily edited or human-augmented; also some risk of false positives. |
| QuillBot’s AI Detector | Includes analyses that can highlight which parts might be AI-generated; good for edited or paraphrased content. | Can misclassify text that’s just polished or refined with grammar tools; difficulty when human & AI portions are blended. |
| Scribbr (premium + free) | Among top in independent tests; premium version ~84% accuracy in mixed test sets. | Even the best tools drop in performance on specialist topics or heavily paraphrased content; cost can be a barrier. |
| Sapling, Copyleaks, GPTZero, ZeroGPT, Winston AI | Some have good accuracy, plus usability, user interface, batch scanning etc. | Sometimes large false positive rates; also performance depends on the AI model being checked against. For example, detection of GPT-4 content is more difficult. |

Limitations of AI Tools

Even the best tools have blind spots or places where they struggle. Here are some limitations:

- Edited or “humanized” AI content: If someone takes AI output and changes it (paraphrases it, rewrites sections, adds a unique voice), detection becomes much harder. Some tools drop from, say, 70.3% detection to much lower rates when paraphrasing is aggressive.

- Advanced models: As models improve (e.g. GPT-4, Gemini, etc.), their output becomes more humanlike. Tools trained mostly on earlier model outputs may misclassify it. Studies show higher false-negative rates for text from newer models.

- Topic/domain specificity: Technical, specialized, or niche content (legal, medical, academic writing) can confuse detectors, because less common structures or vocabulary might look “odd” to the tool.

- False positives: Human text can be flagged as AI-generated. For example, a non-native speaker’s writing, concise writing, or very polished text might trigger false alarms. Tools often have to balance sensitivity against specificity.

- Adversarial attempts: Tools or humans that try to evade detection (through paraphrasing, masking, mixing human & AI, etc.) are already causing problems.
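
The sensitivity vs specificity trade-off is easier to see with a worked calculation. The sketch below is illustrative only: the function name and every rate in the usage example are hypothetical numbers chosen for the arithmetic, not measurements of any real detector. It shows how many flagged texts are actually human-written once the share of AI text in the pool is taken into account.

```python
def flag_breakdown(n_texts, ai_share, sensitivity, false_positive_rate):
    """Estimate how flags split between AI-written and human-written texts.

    All inputs are assumptions supplied by the caller:
    - ai_share: fraction of texts that are actually AI-generated
    - sensitivity: fraction of AI texts the detector flags
    - false_positive_rate: fraction of human texts the detector flags
    """
    ai_texts = n_texts * ai_share
    human_texts = n_texts - ai_texts
    true_flags = ai_texts * sensitivity
    false_flags = human_texts * false_positive_rate
    total_flags = true_flags + false_flags
    # Precision: of everything flagged, how much really was AI-generated.
    precision = true_flags / total_flags if total_flags else 0.0
    return {
        "true_flags": true_flags,
        "false_flags": false_flags,
        "precision": precision,
    }


if __name__ == "__main__":
    # Hypothetical scenario: 1,000 essays, 10% AI-written,
    # detector catches 80% of AI text and wrongly flags 5% of human text.
    print(flag_breakdown(1000, 0.10, 0.80, 0.05))
```

In that hypothetical scenario, 80 AI-written essays and 45 human-written essays get flagged, so roughly one flag in three points at a human author. This is why a seemingly small false positive rate still calls for careful handling.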

What to Do If You Need to Judge AI vs Human Content

Because detection tools are imperfect, here are some best practices and strategies to fairly and effectively detect AI-generated text.

- Use multiple tools: Don’t rely on just one detector. If several tools agree, you can be more confident; if they disagree, investigate further.

- Look at context and metadata: Who wrote it, when, and for what purpose? Is the style consistent with other known writings by the same author? Did it suddenly become much more polished or generic?

- Check for unusual consistency: A tone, vocabulary, or sentence structure that is too uniform, or a complete absence of errors, might tip off AI use.

- Spot the “generic content” trap: AI often produces content that is broadly correct and polished but somewhat generic, lacking in personal anecdotes, domain-specific insight or mistakes that humans naturally make.

- Ask for drafts or revisions: In academic or workplace settings, having a process that includes showing earlier drafts can reveal human involvement.

- Use detection + human judgment: Especially in high-stakes situations (academia, legal, publishing), combine tool results with human editors, peer review, or interviews.

- Transparency policies & labeling: Organizations can adopt policies that require disclosures when content is generated or assisted by AI. It helps build trust and makes detection less adversarial.

- Train detection tools regularly: Make sure tools get updated for the newest AI models and that they are recalibrated with recent data.
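
The "use multiple tools" advice can be sketched as a simple agreement check. This is a minimal illustration under stated assumptions: the detector labels, the scores, and the 0.8 / 0.2 thresholds are all hypothetical, and no real detector's API is being called. Scores are assumed to be each tool's reported probability that the text is AI-generated.

```python
def combine_detector_scores(scores, high=0.8, low=0.2):
    """Summarize agreement between several detectors.

    scores maps a detector label to a probability (0.0 to 1.0) that the
    text is AI-generated. The thresholds are illustrative assumptions.
    """
    if not scores:
        raise ValueError("need at least one detector score")
    values = list(scores.values())
    if all(v >= high for v in values):
        return "agree_ai"        # every tool leans strongly toward AI
    if all(v <= low for v in values):
        return "agree_human"     # every tool leans strongly toward human
    return "investigate"         # mixed or uncertain: escalate to a person


if __name__ == "__main__":
    # Hypothetical scores from three unnamed tools.
    print(combine_detector_scores({"detector_a": 0.91, "detector_b": 0.35, "detector_c": 0.88}))
```

Even an "agree_ai" result is best read as a reason to look closer (drafts, context, a conversation with the author) and not as a verdict.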

How to Think About AI Detection

Don’t expect magic. No tool is 100% accurate. Always treat detection as one input, not the final verdict. Detection works best when AI content is unedited, from older models, or when you have good baseline data to compare with.

The “arms race” is real: as detection improves, model developers and users find new ways to evade it. So staying updated is crucial.

False positives can harm people; accusations of misuse of AI need to be handled carefully. Conversely, unchecked AI content can erode trust, misinform, or devalue human-created work.

Maintain Human Oversight

AI should complement human creativity, not replace it. While AI tools are excellent at processing large amounts of data and producing text quickly, human insight and judgment are still crucial.

- Use AI as a Tool, Not a Replacement: Ensure that AI is used to assist in the creative process rather than entirely taking over content creation. Writers, educators, and creators should focus on how AI can support their work, not replace the intellectual input of humans.

  - Example: AI can be used to draft initial ideas or generate first drafts, but human authors should be responsible for refining the tone, adding insights, and ensuring accuracy.

- Human Quality Control: Always have humans review AI-generated content to check for quality, relevance, and accuracy. Relying solely on AI without human oversight could lead to the spread of misinformation, especially if the AI makes errors or lacks nuance in its analysis.

Avoid Misuse and Manipulation of AI

One of the most pressing ethical concerns is the potential for AI to be used in misleading or deceptive ways. From generating fake news to impersonating people’s writing styles, AI can be a tool for manipulation if used unethically.

- Prevent the Spread of Misinformation: AI should not be used to produce content that misleads, deceives, or manipulates public opinion. Content generated by AI should always be truthful and reliable.

  - Example: AI should not be used to fabricate stories, create fake reviews, or produce biased political content.

- Establish Ethical Guidelines for AI Use: Organizations and individuals should create strict ethical guidelines for AI content usage. For example, a publishing house could have a rule stating that AI tools cannot be used to generate politically sensitive articles without clear editorial oversight.

- Protect Individual Identity: Be mindful of privacy and identity concerns when using AI to mimic writing styles. Unauthorized replication of someone’s tone, voice, or personal brand could be considered an invasion of privacy or intellectual property theft.

Fostering an Ethical AI Future

To ensure that AI benefits society without compromising ethics, it’s essential to remain mindful of transparency, accountability, and fairness. By establishing guidelines and best practices, we can promote responsible AI use, enhance human creativity, and build trust with audiences.

By focusing on innovation and integrity, we can harness AI’s power while safeguarding ethical standards for generations to come.
