Large language models (LLMs) like ChatGPT, Gemini, and Claude aren’t just tools for quick answers anymore. They’ve become powerful gateways to information, drawing in billions of visits every month. For marketers and SEOs, this shift signals a major change: audiences are no longer just searching on Google; they’re asking AI directly.

This creates a new challenge. Instead of competing for blue-link rankings on a search results page, brands now need to figure out how to be included in conversational answers generated by AI.

In this article, we’ll break down what LLM SEO means, why it matters, and the strategies you can use to ensure your brand is visible when people turn to AI for answers.

What is LLM SEO?

LLM SEO is the practice of optimizing content and brand signals so that large language models reference, cite, or recommend your business when responding to user queries.

Unlike Google, where multiple pages compete for clicks, LLMs generate one synthesized answer. If your brand isn’t included, you’re invisible.

Why LLM SEO is Different From Traditional SEO

- Fewer results, higher stakes: Instead of 10 blue links, AI gives a single summarized response.

- Citation-based visibility: Some AI tools (like Perplexity or You.com) show sources, others don’t. Visibility may mean being cited or simply being embedded in the model’s knowledge.

- Conversational relevance over keyword matching: LLMs interpret natural language, so exact-match keyword stuffing won’t help.

If you want LLMs to mention your brand, you’ve got to understand the three places they look for answers and how each one rewards different kinds of content. Below, we’ll walk through each source in practical terms and end with clear takeaways you can act on today.

1) Training data – the long memory of the internet

Think of training data as the giant brain dump. Models like GPT are trained on massive collections of text: web pages, books, code, forums, licensed datasets, and human-created transcripts. That training teaches the model language patterns, facts, and common ways people ask and answer questions.

What that means for you:

- Historical content matters. If you published an authoritative guide two years ago and it got traction, that content can be embedded in the model’s knowledge and influence future answers, even if no one links to it today.

- Stale vs. evergreen: Because many models are trained on a snapshot of the web, they can get out-of-date. Evergreen, well-cited content fares better over time.

- Quality beats trickery: Models learn from patterns. Clear, well-written explanations and factual data are more likely to be reproduced by the model than keyword-stuffed fluff.

- You can’t rely on training alone: If you launched a new product this week, a model trained months ago won’t “know” it unless the model has a way to access live data (more on that next).

Keep cornerstone content up to date, include clear summaries and data callouts, and publish original research that other sites can cite. That’s the kind of content that gets baked into a model’s “memory.”

2) Connected search and retrieval: live web access and RAG

Some LLM products don’t just rely on their baked-in knowledge. They use live web search or a retrieval layer to pull fresh documents while answering queries. This is often called Retrieval-Augmented Generation (RAG). In practice, that means the model searches the web (or a curated index), pulls relevant passages, and then composes an answer using those sources.
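To make the search, pull, and compose steps concrete, here is a minimal sketch in Python. It is a toy illustration, not how any particular product works: the `PAGES` index, the `example.com` URLs, the word-overlap score, and the prompt wording are all assumptions made for the example. Real systems typically use a search engine or vector index and a far more sophisticated ranking step.

```python
import re
from collections import Counter

# Hypothetical mini-index. A real retrieval layer would query a web search
# index or a vector store instead of a hard-coded dict.
PAGES = {
    "example.com/crm-guide": "The best CRM for small business teams balances price, ease of use, and integrations.",
    "example.com/email-tips": "Email follow-ups work best when they are automated and sent within two days.",
    "example.com/pricing": "Our CRM pricing starts with a free tier for small business users.",
}

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def score(query: str, passage: str) -> int:
    """Toy relevance score: how often the query's words occur in the passage."""
    query_words = set(tokenize(query))
    passage_counts = Counter(tokenize(passage))
    return sum(passage_counts[word] for word in query_words)

def retrieve(query: str, k: int = 2) -> list[tuple[str, str]]:
    """Return the k highest-scoring (url, passage) pairs."""
    ranked = sorted(PAGES.items(), key=lambda item: score(query, item[1]), reverse=True)
    return ranked[:k]

def build_prompt(query: str) -> str:
    """Compose the text a model would receive: numbered sources, then the question."""
    sources = retrieve(query)
    context = "\n".join(
        f"[{i}] ({url}) {text}" for i, (url, text) in enumerate(sources, start=1)
    )
    return (
        "Answer using only these sources and cite them by number.\n"
        f"{context}\n\nQuestion: {query}"
    )

print(build_prompt("best CRM for small business"))
```

The point to notice is that only passages that survive the retrieval step ever reach the model, so a page that is never retrieved cannot be cited.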

Why this matters:

- Fresh content gets traction fast. If an LLM is using live search, newly published pages that match a query can be cited or used in the generated response.

- How extraction works: The model prefers clear, extractable chunks: headings, short summaries, bullet lists, tables, and labeled sections, because those are easy to pull into an answer.

- Technical gatekeepers matter: If your page is blocked from crawling, slow, or hidden behind heavy JavaScript, a retrieval layer may miss it entirely.

Make sure your critical pages are crawlable and fast. Add clear H2/H3 headings, short summary boxes, and an FAQ section so a retriever can easily surface a tidy snippet from your page.
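A quick way to sanity-check the crawlability part is to test your robots.txt rules against the user-agent names AI crawlers announce. The sketch below uses Python's standard `urllib.robotparser`. The robots.txt content and the URL are made up for the example, and the crawler names in `AI_CRAWLERS` are an assumption based on what operators have publicly documented, so verify them against each vendor's current documentation.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content (hypothetical). To check a live site instead,
# use RobotFileParser.set_url(...) followed by .read().
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

# Assumed crawler user-agent tokens; confirm against each vendor's docs.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot"]

def crawl_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Map each user agent to whether the robots.txt rules let it fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

report = crawl_access(ROBOTS_TXT, "https://example.com/guides/crm", AI_CRAWLERS)
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

With this sample file, GPTBot is blocked from the whole site while the other two fall through to the wildcard rule and are allowed. Note that robots.txt only covers crawl permission; it says nothing about page speed or JavaScript rendering, which need separate checks.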

3) Authority and structure: why format and credibility are currency

Even when an LLM can access many pages, it has to decide which ones to trust and cite. That’s where signals of authority and well-structured content come in.

What signals help:

- E-E-A-T / trust signals: Author bios, credentials, citations, transparent methodology, and data sources all increase your chance of being considered authoritative.

- External validation: Backlinks, mentions in reputable publications, and quotes in industry reports are the signals that tell models (and the humans designing them) that a source is trustworthy.

- Structured markup: JSON-LD schema (Article, FAQ, Product), clear meta information, and machine-readable data make it easier for systems to understand the meaning of your content.

- Readable structure: Headings, lists, and short paragraphs help both retrievers and humans quickly grasp what your page is about.

Add structured data (FAQ, Article, HowTo, Product where relevant), publish author bios with credentials, and pursue digital PR that gets your content cited on high-authority sites.
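As an illustration of the structured data step, the sketch below builds FAQ markup using the schema.org `FAQPage` vocabulary and wraps it in the script tag that JSON-LD is normally embedded in. The `faq_jsonld` helper is a hypothetical name introduced for this example, and the question and answer are taken from this article. Treat it as a starting point and validate the output with a structured data testing tool before shipping.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

markup = faq_jsonld([
    (
        "What is LLM SEO?",
        "LLM SEO is the practice of optimizing content and brand signals so "
        "that large language models reference, cite, or recommend your business.",
    ),
])

# JSON-LD is usually placed in the page inside a script tag like this one.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from the same source as the visible FAQ text helps keep the two in sync, which matters because markup that contradicts the page content undermines the trust signal it is meant to send.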

How Much Traffic Do LLMs Get?

LLM-powered chatbots are drawing a huge audience online. According to SimilarWeb data from May 2025, these channels collectively saw over 7 billion visits. Search Engine Land reports that ChatGPT usage remains massive, but its lead is slowly eroding: after dipping from 87.5% to 77.6% three months ago, the platform has since leveled out, holding steady at around 79% of traffic share. These numbers show just how much people are turning to AI for answers, conversations, and information every day.

What Are The Different Types of LLMs?

Large Language Models (LLMs) come in different shapes and sizes depending on their purpose, architecture, and training. Here’s a simple way to categorize them:

Open-Ended Chatbots

These LLMs, like ChatGPT, Claude, or Google Gemini, are designed to have conversations, answer questions, and generate text in a natural, human-like way. They can handle a wide range of topics and are usually trained on vast amounts of general knowledge.

Instruction-Following Models

These are fine-tuned to follow specific instructions. They excel when you give them clear prompts like “Write a summary” or “Generate a product description.” ChatGPT’s instruction-tuned versions fall into this category.

Specialized Domain LLMs

Some LLMs are trained specifically for certain industries or topics, like legal, medical, or technical fields. They understand domain-specific terminology and provide more accurate answers in that area. Examples include LEGAL-BERT and Med-PaLM.

Multimodal LLMs

These models can handle multiple types of input, not just text. They can process images, videos, or audio alongside text to generate richer responses. GPT-4 with vision capabilities is a prime example.

Generative LLMs for Content Creation

These focus on generating creative content such as stories, code, marketing copy, or poetry. They are optimized for producing text that reads naturally and is engaging.

Retrieval-Augmented LLMs (RAG)

These models combine LLMs with external databases or knowledge sources. They fetch relevant information in real-time to give more accurate or up-to-date answers. Examples include Perplexity AI and certain enterprise AI assistants.

Case in point: why HubSpot, Zoho, and Salesforce show up for “best CRMs for small business”

Run that query in an LLM-powered tool that uses live sources, and you’ll commonly see HubSpot, Zoho, and Salesforce mentioned. Here’s the practical why:

- Comprehensive content: These companies publish detailed buyer’s guides, feature comparisons, pricing breakdowns, and use-case pages that directly map to user intent.

- Structured pages: Their content often includes clear headings, comparison tables, pros/cons, and FAQ sections, exactly the kind of text a retrieval system likes to extract.

- External citations: They’re widely covered by review sites, publications, and third-party roundups. That external footprint is what turns a brand from “a page on the web” into an authority signal.

- Up-to-date info: These brands refresh product docs and publish news/announcements, making them discoverable as fresh sources when a model looks for current info.

If you want to be included in those answers, replicate what they do: build comparison pages that directly answer search queries, include structured summaries and tables, and get your brand mentioned on reputable third-party sites.

Quick checklist: what to do tomorrow

- Audit crawlability: ensure key pages are indexable, fast, and not blocked by robots.txt.

- Add extractable blocks: start every long post with a one-paragraph summary and include bullet-point “key takeaways.”

- Deploy schema: add FAQ, Article, Product, HowTo JSON-LD where appropriate.

- Publish original data: even small surveys or case studies make you a source worth citing.

- Earn external mentions: target one high-authority roundup or guest post each quarter.

- Test manually: ask LLMs your target questions and see who gets cited, then reverse-engineer their content structure.
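For the manual testing step, it helps to keep a simple tally of which brands show up across the answers you collect. The sketch below assumes you have pasted the answers into a list yourself. The brand names and answer texts are placeholders invented for the example, not real model output, and `mention_counts` is a hypothetical helper.

```python
import re
from collections import Counter

def mention_counts(answers: list[str], brands: list[str]) -> Counter:
    """Count how many answers mention each brand at least once.

    Matching is case-insensitive and on whole words only.
    """
    counts = Counter({brand: 0 for brand in brands})
    for text in answers:
        for brand in brands:
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    return counts

# Placeholder data: replace with answers you saved from your own test prompts.
saved_answers = [
    "Popular options include BrandA and BrandB.",
    "Many small teams start with BrandA because of its free tier.",
    "BrandB is often suggested for budget-conscious teams.",
]

counts = mention_counts(saved_answers, ["BrandA", "BrandB", "YourBrand"])
for brand, n in counts.most_common():
    print(f"{brand}: mentioned in {n} of {len(saved_answers)} answers")
```

Because model answers vary from run to run, rerunning the same prompts on a schedule and comparing the tallies gives a more reliable picture than a single test.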

Case Studies: Who’s Winning in LLM SEO?

1. HubSpot in Marketing Queries

HubSpot has been a household name in traditional SEO for years, but their success now extends into the world of AI as well. Why? Because they’ve mastered the art of creating educational, evergreen, and well-structured guides.

If you ask ChatGPT something like “What are the best inbound marketing strategies?”, there’s a good chance HubSpot will pop up in the response even when there’s no direct citation.

Content that is timeless, clearly structured, and genuinely helpful doesn’t just rank on Google; it sticks in the “memory” of AI models, making your brand a go-to reference.

2. Zapier in Workflow Automation

Zapier has built its SEO presence around solving very specific, everyday problems. Instead of just targeting broad keywords, they answer conversational, long-tail queries such as “how to automate email follow-ups”.

This style of content fits perfectly with how people talk to AI tools. It’s no surprise that ChatGPT and Claude often mention Zapier when recommending automation platforms.

Think like your audience. Create content that answers real, question-based prompts, and you’ll increase your odds of being mentioned by LLMs as a trusted solution.

3. Perplexity Citing Forbes and TechCrunch

When people ask Perplexity for product comparisons or industry news, it often cites heavyweights like Forbes and TechCrunch. These outlets win because they consistently publish authority-driven, data-backed journalism with clear sources, exactly the kind of content LLMs trust and prefer to cite.

Authority still matters. Invest in thought leadership, digital PR, and data-backed storytelling if you want your brand to not just appear in AI answers, but also be directly cited as a source.

Key Strategies for LLM SEO

1. Become the source that people and AI both trust

If you want your brand to show up in AI-powered answers, the first step is to become a trusted source of truth. LLMs tend to favor content that looks credible, well-researched, and backed by expertise. That means surface-level blog posts won’t cut it anymore.

Here’s how you can build authority that LLMs recognize:

- Publish original research or data reports. Numbers and insights that only your brand can provide make your content more valuable.

- Include expert commentary. Quoting industry professionals (internal or external) adds an extra layer of trust.

- Focus on E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). This isn’t just a Google thing: AI models also rely on signals that suggest your brand knows its stuff.

For example: Ahrefs is a perfect case study here. Their blog doesn’t just cover generic SEO tips; it regularly publishes data-heavy studies, like analyzing billions of search queries or backlink profiles. Because of this, they’re not only a top result in Google searches but are also frequently referenced in AI-generated responses.

2. Write content the way people actually ask questions

Traditional SEO often focused on keywords like “CRM software” or “email automation tool”. But in the age of LLMs, users don’t just type keywords; they ask full questions: “What’s the best CRM for small businesses?” or “How do I automate follow-up emails in Gmail?”.

To show up in those answers, your content needs to match the way people naturally speak to AI tools.

Here’s how to do it:

- Structure content around “how,” “why,” and “what” questions. These are the exact types of queries users ask in ChatGPT, Perplexity, or Gemini.

- Add FAQ sections. Break down common user questions and provide short, direct answers that LLMs can easily extract and reuse.

- Use a clear, educational tone. Think of it as teaching a beginner rather than impressing an expert. AI models prefer well-explained content that’s accessible to everyone.

Zapier nails this with its massive library of “How to” guides. Instead of writing vague blog posts, they create step-by-step tutorials like “How to automatically send Slack messages from Google Sheets.” Because this mirrors the way people actually phrase their problems, LLMs naturally pull Zapier into the conversation when someone asks about workflow automation.

Why it works

AI models are trained on conversational data, so when your content sounds like the questions people actually ask, you increase the chances of being surfaced in AI-powered answers.

3. Do not just chase keywords, build depth around topics

Search engines and AI models no longer look at content in isolation. Instead of rewarding single pages that repeat a keyword, they prioritize websites that cover a topic thoroughly from multiple angles. This means your strategy should shift from chasing individual keywords to building topic depth.

One of the best ways to do this is through topic clusters. Start with a central, in-depth guide that acts as the hub (for example, The Complete Guide to CRM Software). Around that hub, create related pages that dig into specific aspects such as pricing, integrations, comparisons, or use cases. When you connect these pieces through smart internal linking, you show both search engines and LLMs that your brand understands the subject in full.
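The hub-and-spoke linking described above is easy to audit once you have a list of which pages link to which. The sketch below assumes a hand-built map of internal links. The page paths and the `cluster_gaps` helper are hypothetical, and in practice you would export the link map from a site crawler.

```python
# Hypothetical internal-link map: each page maps to the set of pages it links to.
LINKS = {
    "/crm-guide": {"/crm-pricing", "/crm-integrations", "/crm-comparisons"},
    "/crm-pricing": {"/crm-guide"},
    "/crm-integrations": {"/crm-guide", "/crm-pricing"},
    "/crm-comparisons": set(),  # this page never links back to the hub
}

def cluster_gaps(links: dict[str, set[str]], hub: str) -> dict[str, list[str]]:
    """Find missing links between a hub page and its supporting pages."""
    spokes = sorted(page for page in links if page != hub)
    return {
        # Supporting pages the hub does not link to.
        "hub_missing_links_to": [s for s in spokes if s not in links[hub]],
        # Supporting pages that do not link back to the hub.
        "spokes_missing_link_to_hub": [s for s in spokes if hub not in links[s]],
    }

gaps = cluster_gaps(LINKS, "/crm-guide")
for issue, pages in gaps.items():
    print(f"{issue}: {pages or 'none'}")
```

Here the check flags `/crm-comparisons` as a supporting page that never links back to the hub, which is the kind of gap that weakens a cluster.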

Another layer of support is schema markup. While it is not always required if your structure is clear, schema provides AI models with signals about the type of content you are publishing—whether it is a product review, a comparison, or a how-to guide. This extra context helps your pages become more “machine-readable.”

4. Create structured and digestible content

When people read online, they skim. AI models are no different. The easier your content is to scan and break down, the more likely it is to be pulled into an AI-generated answer. That is why structure is just as important as substance.

Make your content simple to parse by using bullet points, numbered lists, and short paragraphs. Break complex ideas into clear definitions so that industry terms are not left unexplained. And always add summaries or key takeaways at the beginning or end of your articles—this not only helps readers but also gives AI models clean snippets to reference.
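To see your content roughly the way a retriever might, you can split a draft at its headings and check how long each section runs. The sketch below assumes the draft is written in Markdown with `##` and `###` headings. The 60-word limit is an arbitrary threshold chosen for illustration, not a known cutoff used by any AI tool.

```python
import re

def split_by_headings(markdown: str) -> list[tuple[str, str]]:
    """Split Markdown into (heading, body) chunks at H2/H3 headings.

    Text before the first heading is labeled "(intro)". Headings with no
    body text under them are skipped.
    """
    chunks = []
    heading, body = "(intro)", []
    for line in markdown.splitlines():
        match = re.match(r"^#{2,3}\s+(.+)", line)
        if match:
            if body:
                chunks.append((heading, " ".join(body)))
            heading, body = match.group(1).strip(), []
        elif line.strip():
            body.append(line.strip())
    if body:
        chunks.append((heading, " ".join(body)))
    return chunks

def long_chunks(chunks: list[tuple[str, str]], max_words: int = 60) -> list[str]:
    """Return headings whose body exceeds max_words (an assumed threshold)."""
    return [heading for heading, text in chunks if len(text.split()) > max_words]

SAMPLE = """\
A one-paragraph summary of the article.

## What is LLM SEO?
LLM SEO is the practice of optimizing content so that models cite your brand.

## Key takeaways
- Keep pages crawlable.
- Add FAQ sections.
"""

chunks = split_by_headings(SAMPLE)
for heading, text in chunks:
    print(f"{heading}: {len(text.split())} words")
print("Sections to tighten:", long_chunks(chunks) or "none")
```

Sections that come back as long are candidates for a short summary sentence at the top or a split into smaller, clearly labeled subsections.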

Example: Forbes often includes “Key Points” sections at the top of their articles. This structured approach makes it easier for tools like Perplexity to extract and cite them directly in answers, increasing their visibility in AI-powered search.

Why it works:

Structured content acts like a roadmap for both humans and machines. When your ideas are easy to digest, you make it frictionless for AI to recognize your brand as a credible, quotable source.

5. Build Citations and Mentions Across the Web

LLMs do not rely only on your own website; they also look at how often your brand shows up across the wider web. If your company is consistently cited in reports, reviews, and roundups, AI tools are more likely to consider you a trustworthy answer.

Start with the basics by earning backlinks from authoritative domains, but do not stop there. Focus on getting your brand name into industry conversations, whether through guest posts, expert commentary, or inclusion in comparison articles. A well-planned digital PR campaign can help you secure mentions in high-profile publications and niche industry sites alike, expanding your footprint where LLMs are gathering data.

Example: When you ask ChatGPT about the best project management tools, Asana almost always appears. This visibility is not just because of Asana’s own blog content. It is also because Asana is frequently cited in third-party comparisons, reviews, and roundups across the web. Those external mentions act as authority signals that LLMs recognize.

Why it works:

The more your brand is referenced outside your own site, the more it becomes part of the “shared knowledge” that LLMs pull from. Think of it as digital word-of-mouth—consistent mentions build trust and visibility in both human and machine-driven recommendations.

Challenges in LLM SEO

Cracking LLM SEO is exciting, but it is not without its hurdles. Unlike Google, where we at least get some insight into ranking factors, AI-powered search works in ways that are far less transparent. Here are a few of the biggest challenges brands face:

Opaque algorithms

With Google, SEOs have years of experience, data, and even official guidelines to lean on. LLMs are different. They do not reveal how they decide which sources to trust or which brands to include in their answers. This makes optimization less predictable and more experimental.

Attribution gaps

Your content might shape an AI’s response, but you may not always get credit for it. In some cases, the model will mention your brand directly, but in many others, your insights could be paraphrased without a citation. That makes visibility harder to measure.

Constant evolution

LLMs are not static. They are retrained and updated regularly, which means what works today might look very different in six months. Brands need to treat LLM SEO as an ongoing process, testing and refining strategies as the technology evolves.

The takeaway: LLM SEO is still new territory. The rules are not fully written yet, but the brands that experiment early will be in the best position to adapt and capture visibility as these models mature.

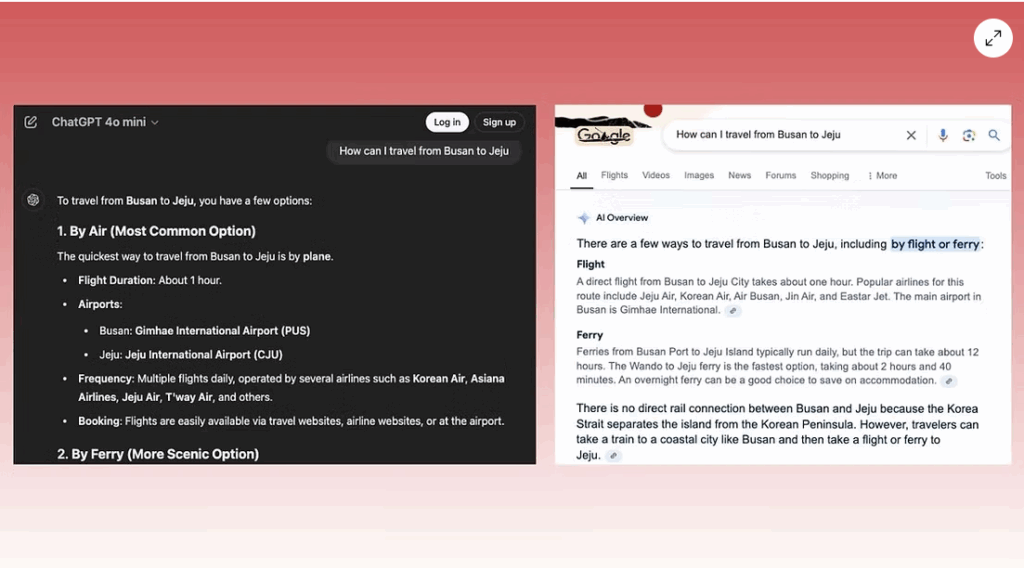

Is Generative Engine Optimization the Same as AI Overview Optimization?

Not exactly, though they sound similar. Think of it this way: Generative Engine Optimization, or GEO, is about making your content easy for AI tools to use when they generate answers. It’s like giving AI all the right building blocks so it can create helpful, accurate responses for users.

ChatGPT results (left) and a Google AI overview (right) for the same query ([How can I travel from Busan to Jeju]).

AI Overview Optimization, or Answer Engine Optimization (AEO), is a bit different. It’s about making your content clear and well-structured so AI can understand it and summarize it correctly. The focus here is on being easy to read, organized, and concise, so your content gets picked up as a top answer or snippet.

So while both GEO and AEO are about getting your content noticed by AI, GEO is more about helping AI generate content, and AEO is about helping AI understand and summarize it. Together, they make your content much more likely to show up in AI-powered search and be remembered by users.

The Future of LLM SEO

We are only at the beginning of what AI-powered search will look like. As large language models continue to evolve, the way brands approach visibility will change just as dramatically as it did in the early days of Google. Here is what to expect:

AI-first content strategies

Instead of optimizing around keywords alone, brands will start shaping content based on how people phrase conversational prompts. The focus will shift to anticipating the exact questions users might ask tools like ChatGPT, Gemini, or Perplexity.

Paid placements inside LLMs

Just as Google eventually introduced ads, it is likely that AI platforms will roll out paid inclusion models. Brands may soon compete for sponsored spots in AI answers, making a mix of organic and paid LLM visibility part of the marketing playbook.

A stronger focus on authority

With rising concerns about misinformation, AI tools will lean more heavily on trusted brands and authoritative data sources. This means credibility, expertise, and brand trust will matter more than ever for appearing in answers.

The takeaway: The future of LLM SEO will look less like a ranking game and more like a competition for trust, authority, and conversational relevance. Brands that start preparing now will be ready when AI-powered search becomes a mainstream discovery channel.

Conclusion

LLM SEO is all about meeting your audience where they are: inside AI tools and conversational platforms. It’s not about abandoning traditional SEO, but about making your content easy for AI to find, understand, and use.

By focusing on clarity, trustworthiness, and structured content, you increase the chances your brand will be surfaced, cited, and remembered.

The brands that start optimizing for AI now will gain a visibility edge that others will struggle to catch up with, turning AI-powered search into a real growth opportunity.

Ready to put LLM SEO into action? DigiXL Media is here to help. Learn how to spot which posts need attention, refresh them effectively, and keep your content driving traffic and engagement.
